Data Fusion Services

Seamless Data Integration & Insights

DataMesh FactVerse Data Fusion Services unifies data from multiple sources — such as IoT sensors, enterprise systems, and operational logs — into a single digital environment within FactVerse. By eliminating data silos and providing analytics and ML platform integration, Data Fusion Services accelerates decision-making, supports continuous optimization, and empowers businesses to harness the full potential of their digital twin strategies.

Key Capabilities

Core building blocks that define how Data Fusion Services delivers operational value.

- Expanding Connector Options, One Pipeline

Connect via REST API, MQTT, OPC UA, BACnet, Modbus, JDBC, CSV upload, Microsoft Fabric, and pre-built adapters for Siemens, Honeywell, Kepware, PI, and Azure IoT Hub. Ingest data in minutes with no custom middleware.

- AI Auto-Map to Digital Twin Models

AI automatically maps raw sensor tags and data fields to digital twin entities — no manual schema mapping required. The system recognizes naming patterns, unit types, and hierarchies to create accurate twin bindings on first import.

- Growing Data Transformation Template Library

Pre-built templates for common industrial scenarios: HVAC performance scoring, energy benchmarking, OEE calculation, alarm correlation, SPC charting, and more. Customize or clone templates to match your specific KPIs.

- Data Cleansing & Quality Engine

Automated outlier detection, gap interpolation, unit normalization, and timestamp alignment across heterogeneous sources. Data quality scores are tracked per-source so you always know which feeds need attention.

- ML-Ready Data Mart

Cleansed, normalized data is stored in a centralized Data Mart optimized for direct consumption by ML/AI frameworks, BI dashboards, and the FactVerse AI Agent. No ETL pipelines to build — data flows from ingestion to model training automatically.

- Real-Time Twin Binding

Live sensor values stream directly into 3D twin scenes created in FactVerse Designer. Equipment color, state, and animation update in real time — so operators see the facility as it is, not as it was in a stale report.
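To make the Data Cleansing & Quality Engine capability more concrete, the following is a minimal Python sketch of three of the steps it names: unit normalization, outlier detection, and gap interpolation, plus a simple per-source quality score. It is not the FactVerse implementation. The function names, the Fahrenheit input, the modified z-score threshold of 3.5, and the quality-score definition are all assumptions chosen for illustration, and timestamp alignment is left out by assuming evenly spaced samples.

```python
from statistics import median


def fahrenheit_to_celsius(f):
    """Unit normalization: convert one Fahrenheit reading to Celsius."""
    return (f - 32.0) * 5.0 / 9.0


def flag_outliers(values, threshold=3.5):
    """Return indexes whose modified z-score (median/MAD based) exceeds threshold.

    The 3.5 cutoff is a common rule of thumb, assumed here for illustration.
    """
    present = [v for v in values if v is not None]
    if not present:
        return set()
    med = median(present)
    mad = median(abs(v - med) for v in present)
    if mad == 0:
        return set()  # no spread, so nothing can be called an outlier
    return {
        i for i, v in enumerate(values)
        if v is not None and 0.6745 * abs(v - med) / mad > threshold
    }


def interpolate_gaps(values):
    """Linearly fill None gaps that lie between two known values.

    Gaps at the very start or end are left as None.
    """
    filled = list(values)
    known = [i for i, v in enumerate(filled) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (filled[right] - filled[left]) / (right - left)
        for i in range(left + 1, right):
            filled[i] = filled[left] + step * (i - left)
    return filled


def cleanse(raw_fahrenheit):
    """Cleanse one hypothetical feed of evenly spaced readings (None = missing).

    Returns (cleaned_celsius, quality), where quality is the assumed score:
    the fraction of raw readings that were present and not flagged as outliers.
    """
    if not raw_fahrenheit:
        return [], 0.0
    celsius = [None if v is None else fahrenheit_to_celsius(v)
               for v in raw_fahrenheit]
    outliers = flag_outliers(celsius)
    masked = [None if i in outliers else v for i, v in enumerate(celsius)]
    quality = sum(v is not None for v in masked) / len(masked)
    return interpolate_gaps(masked), quality


# One missing sample and one obvious spike (500 degrees F) in a made-up feed
cleaned, quality = cleanse([68.0, 69.8, None, 73.4, 500.0, 71.6, 69.8])
```

In this sketch the spike is masked before interpolation, so it cannot distort the values used to fill neighboring gaps. The missing sample and the spike both lower the feed's quality score.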

Data Fusion Services Modules

| Module | Function |
|---|---|
| Data Ingestion | Connect to data sources via MQTT, OPC UA, HTTP, and REST APIs |
| Data Mapping | Map raw data to digital twin entities automatically |
| Data Cleansing | Remove inaccuracies and ensure data quality |
| Data Computation | Transform and compute derived metrics |
| Data Mart | Centralized storage optimized for ML, AI, and BI tools |
| Visualization | Intuitive dashboards and reports |
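
As an illustration of the kind of derived metric the Data Computation module is described as producing, here is a minimal Python sketch of an OEE (Overall Equipment Effectiveness) calculation, one of the template scenarios named earlier. It uses the standard definition OEE = Availability × Performance × Quality. The function name, parameter names, and sample figures are assumptions for this example and do not reflect the actual FactVerse template.

```python
def compute_oee(planned_minutes, downtime_minutes,
                ideal_cycle_minutes, total_count, good_count):
    """Compute OEE and its three factors from one shift's totals.

    Availability = run time / planned production time
    Performance  = (ideal cycle time * total count) / run time
    Quality      = good count / total count
    """
    run_minutes = planned_minutes - downtime_minutes
    if planned_minutes <= 0 or run_minutes <= 0 or total_count <= 0:
        raise ValueError("planned time, run time, and total count must be positive")

    availability = run_minutes / planned_minutes
    performance = (ideal_cycle_minutes * total_count) / run_minutes
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Hypothetical shift: 500 planned minutes, 100 minutes of downtime,
# 0.5 minutes ideal cycle time, 720 units produced, 648 of them good.
shift = compute_oee(500, 100, 0.5, 720, 648)
```

Returning the three factors alongside the final score lets a dashboard show which factor is dragging OEE down, rather than only the headline number.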

Typical Outcomes

Teams use this workflow to validate operational value through a focused pilot: better visibility, more consistent execution, cleaner records, faster handoffs, and clearer decision evidence. Exact impact depends on site scope, data readiness, workflow maturity, and rollout depth.

Frequently Asked Questions

How do I implement Data Fusion Services?

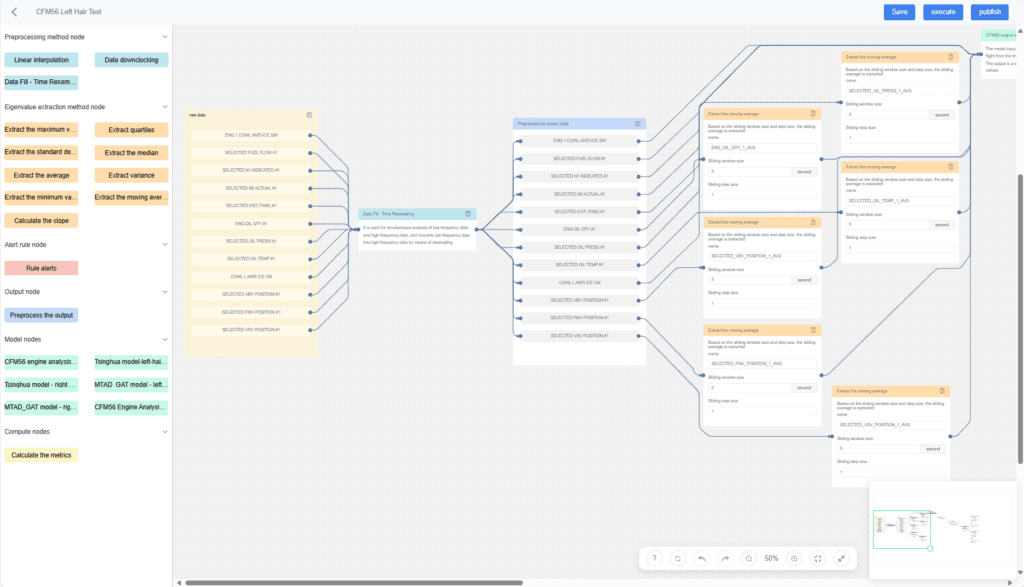

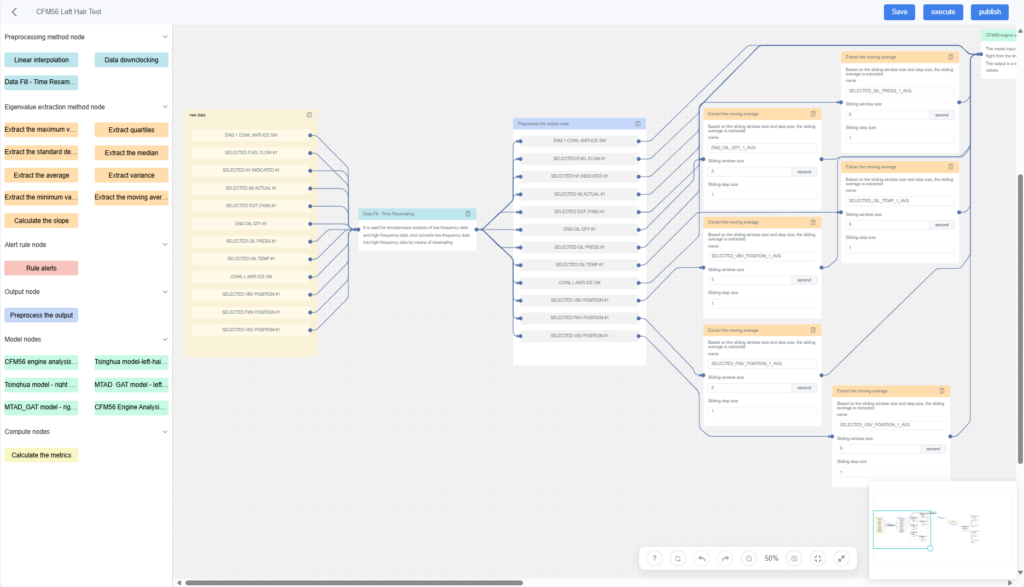

Start by defining clear objectives, such as streamlining real-time data usage or accelerating ML initiatives. Assess existing data sources and identify the protocols (MQTT, OPC UA, etc.) needed for data ingestion. Work with DataMesh or a certified partner to configure the Data Fusion Services modules: Data Ingestion, Data Mapping, Data Cleansing, Data Computation, Data Mart, and Visualization. Conduct a pilot, then roll out organization-wide with training and optimization.

What is the licensing model?

Data Fusion Services follows a license-and-services model: (1) A Node/Server License covers on-premises or private-cloud deployment to host the Data Fusion Services environment and manage data processing tasks. (2) Optional Service Fees cover customization or integration services for specific use cases or advanced AI/ML configurations.

How does Data Fusion Services integrate with existing systems?

The Data Ingestion module uses standard protocols (MQTT, OPC UA, HTTP, etc.) to pull data from MES, ERP, and other systems. REST APIs and flat-file inputs are also supported. Once ingested, Data Fusion Services automatically cleanses and maps data to digital twin entities, creating a unified dataset accessible for analysis, simulation, or AI/ML processing.
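
To give a feel for what mapping an ingested tag to a digital twin entity can involve, the sketch below parses a tag name into a structured binding using a fixed naming pattern. This is a deliberately simple rule-based stand-in, not the AI mapping that Data Fusion Services performs. The `site/asset/point_unit` tag convention, the `TwinBinding` type, and the `bind_tag` function are all hypothetical and exist only for this example.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical tag convention: "<site>/<assetType>-<number>/<point>_<unit>"
# e.g. "PlantA/AHU-03/SupplyAirTemp_degC"
TAG_PATTERN = re.compile(
    r"^(?P<site>[^/]+)/(?P<asset>[A-Za-z]+-\d+)/"
    r"(?P<point>[A-Za-z]+)_(?P<unit>[A-Za-z%]+)$"
)


@dataclass(frozen=True)
class TwinBinding:
    """Illustrative record linking one raw tag to a twin entity property."""
    site: str
    asset: str
    asset_type: str
    point: str
    unit: str


def bind_tag(tag: str) -> Optional[TwinBinding]:
    """Parse a raw tag into a TwinBinding, or return None if it does not match."""
    match = TAG_PATTERN.match(tag)
    if match is None:
        return None  # unmatched tags would need review or a smarter mapper
    asset = match.group("asset")
    return TwinBinding(
        site=match.group("site"),
        asset=asset,
        asset_type=asset.split("-")[0],  # "AHU-03" -> "AHU"
        point=match.group("point"),
        unit=match.group("unit"),
    )


binding = bind_tag("PlantA/AHU-03/SupplyAirTemp_degC")
```

A fixed pattern like this breaks as soon as sources use different naming schemes, which is the gap that pattern-recognizing, automated mapping is meant to close.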

What hosting platform is recommended?

DataMesh recommends Microsoft Azure as the hosting platform for Data Fusion Services.
